The first things you see when you exit the elevators on the topmost floor of the Whitney Biennial are two AI “paintings” by Holly Herndon and Mat Dryhurst, called xhairymutantx. The work was commissioned by the Whitney for the Biennial, which makes sense, because of all the works in the Biennial, this one best represents this year's theme: “Even Better than the Real Thing.”

I was very excited when I saw Holly Herndon’s name on the roster of artists, having been a fan of her music for many years. I wasn't necessarily surprised: her music is quintessentially electronic and avant-garde, often juxtaposing ethereal, almost pagan sounds (some songs are inspired by sacred harp) with the extremely high-tech (a collaboration between several real people and AI-generated voices). Nor was I surprised to learn she researched platform policy for her PhD at Stanford.

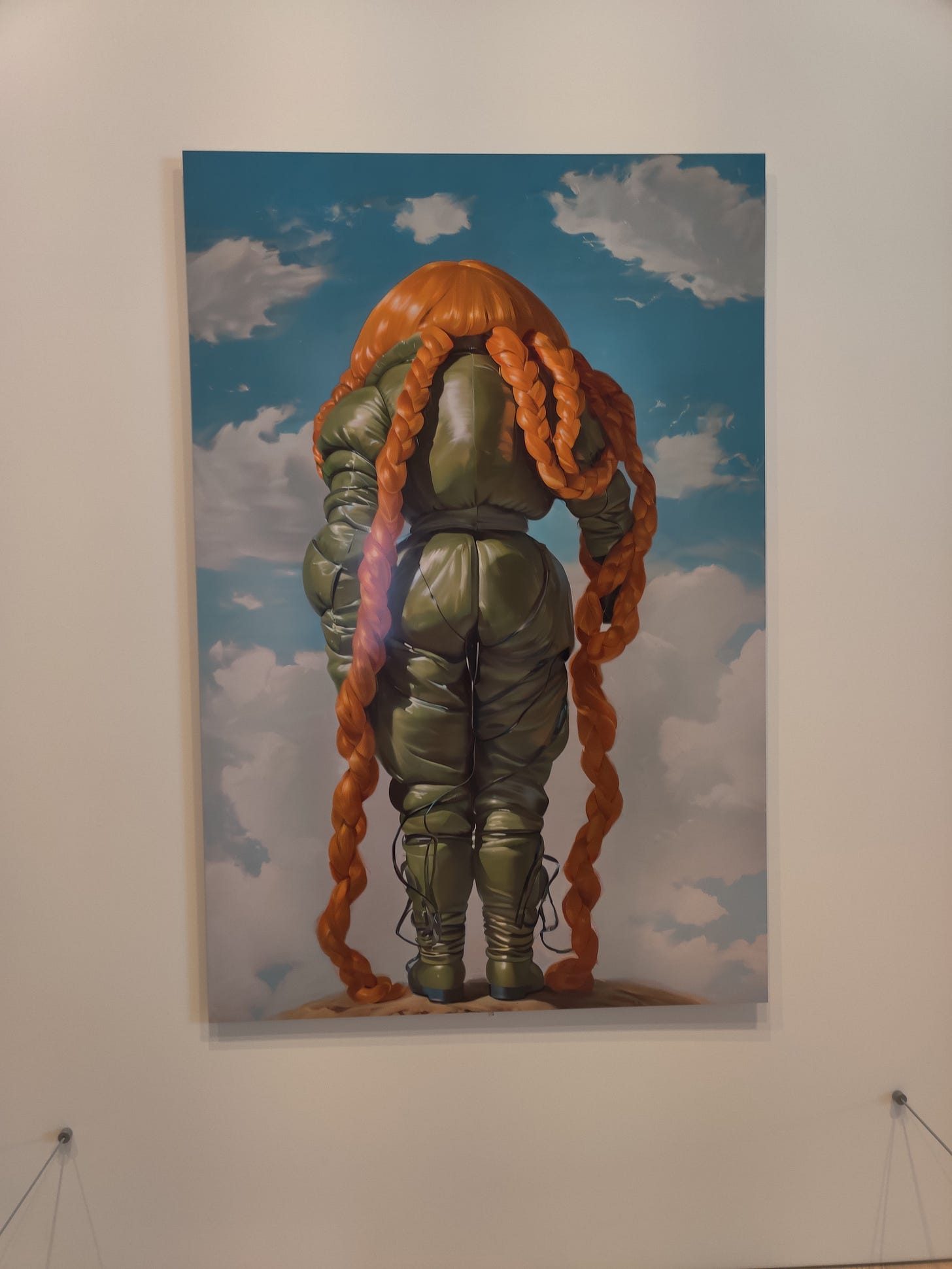

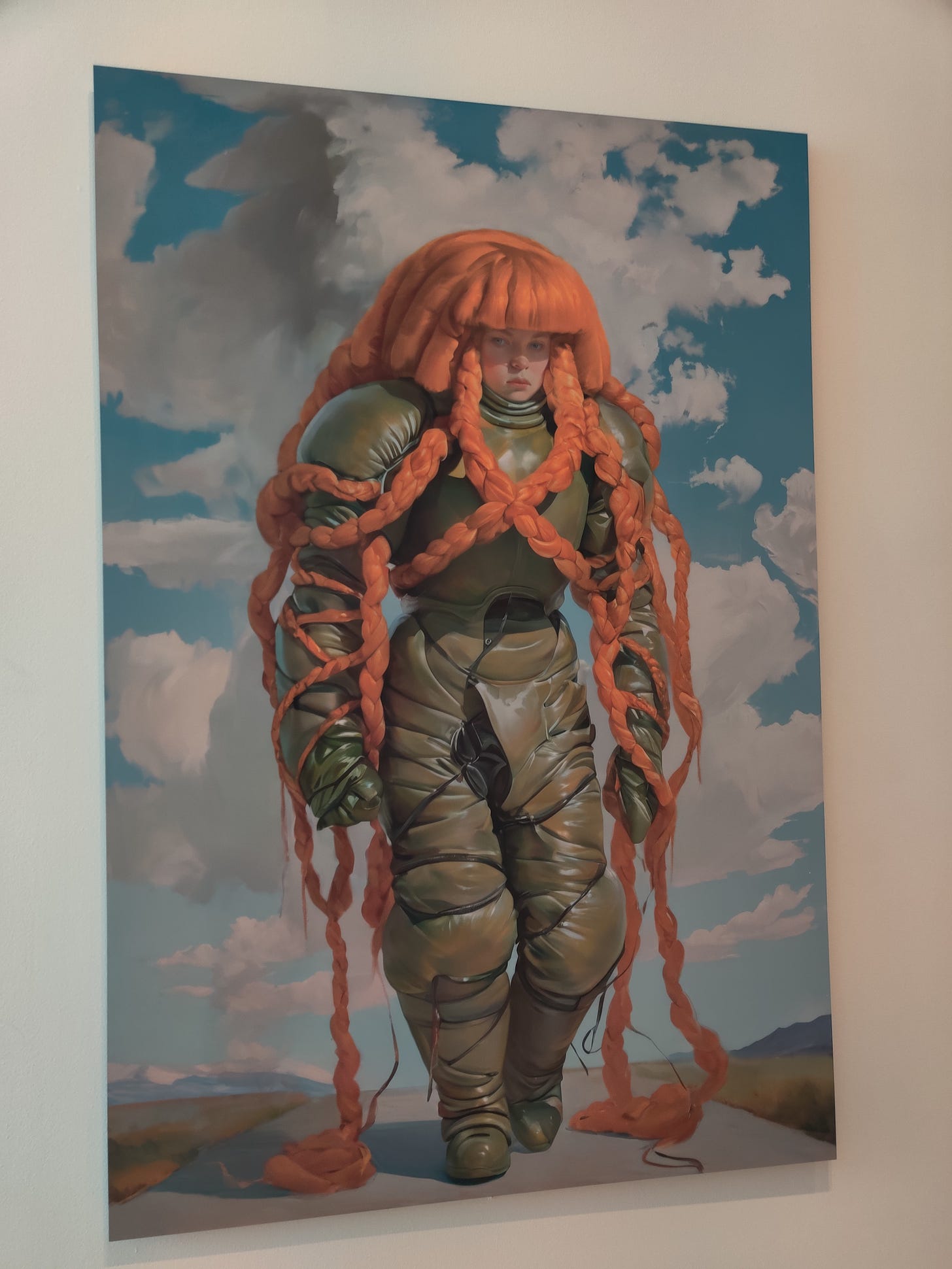

The collaborative piece she co-created for the Biennial is, at first glance, offensively ugly and quite stupid. As a fan of Herndon, I vaguely recognized her in the redheaded astronaut monstrosity with pale skin and freckles. A quick read of the wall text elicits an “ugh, what, yeah, no thank you” reaction. The art is not dissimilar from the faux-realistic, painterly high-fantasy style that has somehow become characteristic of most AI art (why, exactly, is the majority of art online like this? And by this, I mean quite this ugly). Yet I couldn't stop thinking about the image, so I reread the wall text:

“Here on artport, the artists have trained a text-to-image AI model on images of Holly that have been altered through costuming that distorts the artist’s body, and exaggerates her most noted feature, her hair, to transform her identity within AI models. No matter what text prompt is entered by the user, the results will generate a strange version of Holly. The new images are stored in the project gallery, thereby entering the internet at large and potentially becoming part of the data set behind new AI-generated images. Since AI programs view institutional websites like whitney.org as trusted sources, the artists play with the idea of using the Museum’s heft to influence the parameters of AI models, and to raise questions about the extent of self-determination possible with the internet today.”

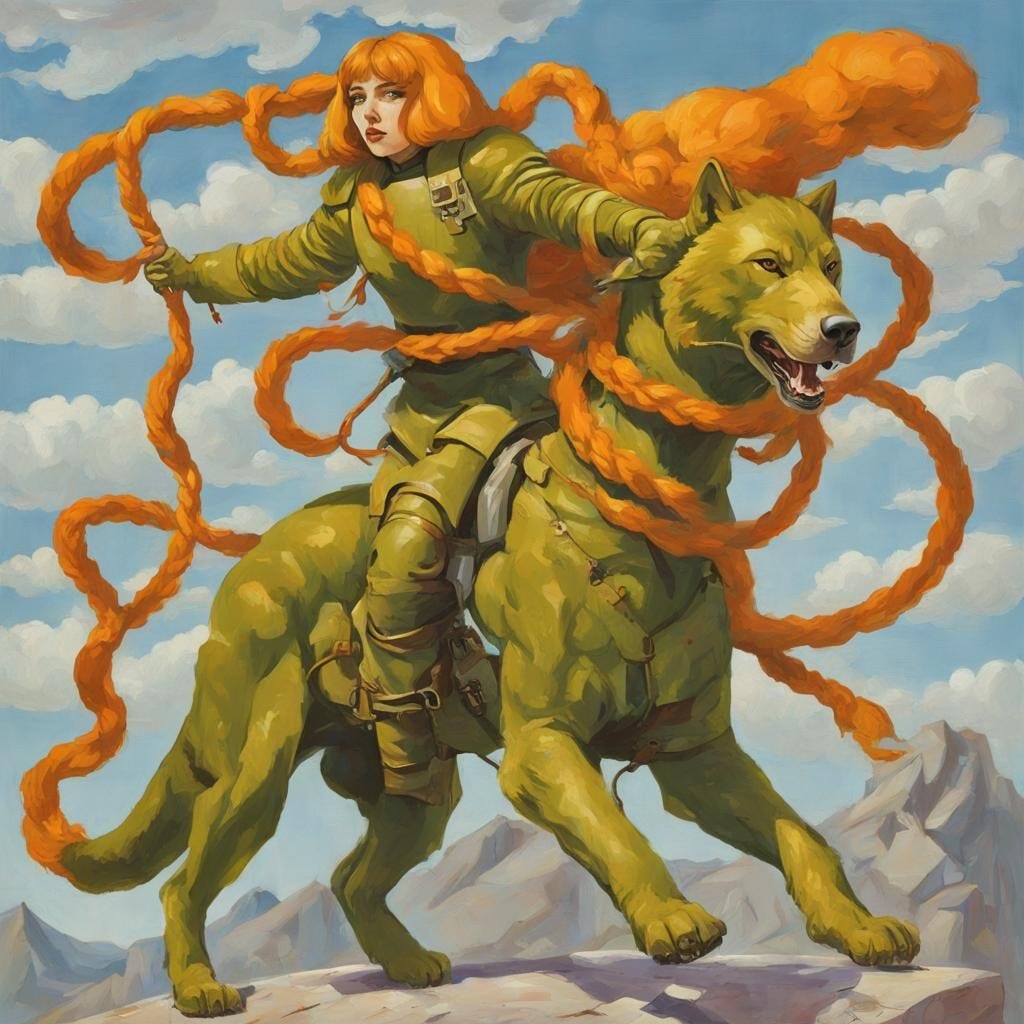

What's interesting about this piece is its fundamentally collaborative nature. Attendees can enter a text prompt into the website on the Biennial page for the piece, and this creates a new image. It seems that the Whitney prints new versions of the image as the new “versions” of Herndon proliferate, although it's not entirely clear how often this happens. Going online a few hours after the show, I entered a prompt, “she-wolf destroying Silicon Valley and starting a communist revolution,” and received the following image, which was then integrated into the data set behind the piece:

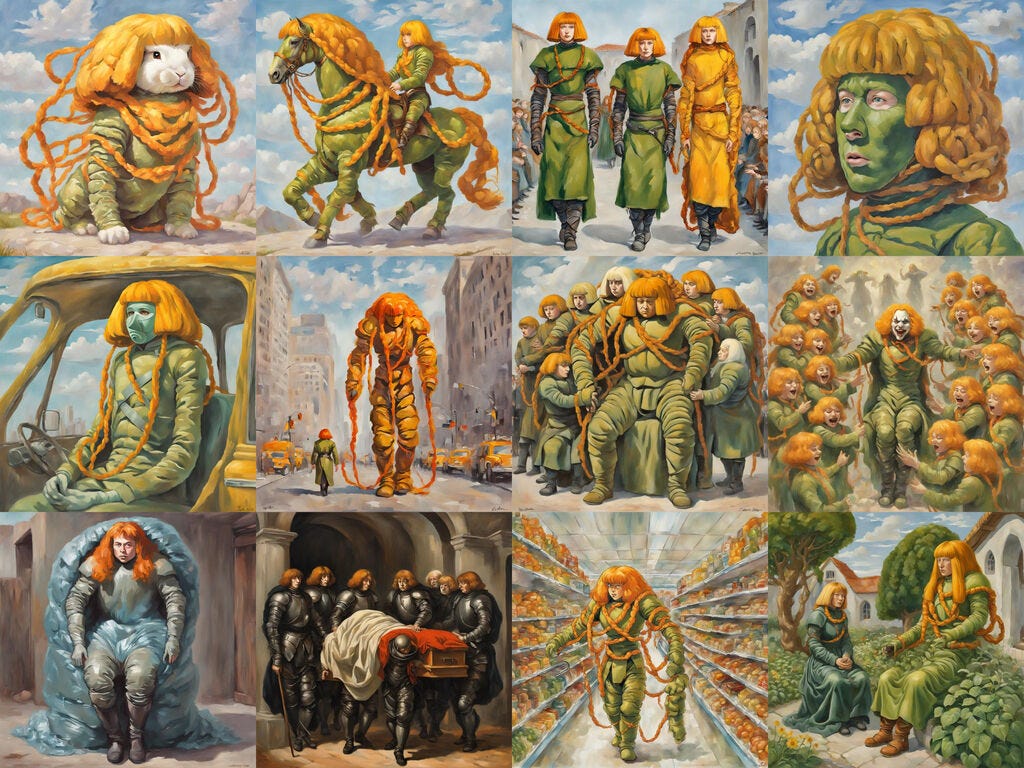

An improvement on the “original,” for sure, although all references to Silicon Valley or communist revolution were dropped (not to mention the various idiotic hallucinations typical of AI-generated images). The website displays a variety of user-collaborative images:

Ostensibly, the artwork is meant to investigate AI itself, to “raise questions” about the use of AI in a high-art context (and it doesn't really get higher than the Whitney Biennial, “the longest running survey of contemporary art in the United States”). It is also an attempt to control the future of AI, to refuse to ignore a world that is, as we speak, using it to cut labor costs. A quick Google of the Whitney produces, like any Google search these days, a dubious description:

I saw the show with one of my best friends, as we happened to overlap in NYC for a day. We've been going to museums together for ages (literally since high school) and both interned (unpaid, obviously) in prestigious art museums while in college. Since then I've become firmly ensconced in the dusty halls of academia, while she entered the Real Workforce (TM) and now works in market research, much closer to the world of tech than I am. The piece prompted us to talk about AI, which has been affecting both of us at work. For my part, my end-of-term evaluations from students have shown, for the first time in my 12 years of teaching university students, affirmative responses to the question “has there been cheating?” Students knew, as I did, that AI was used to write discussion board posts, against course policy. For her part, her higher-ups no longer include copywriters in their budget, and my friend is expected to go above and beyond her job by feeding information to AI so that it writes the copy itself. AI, as most of us humanists know, seems at first glance to save labor but in reality cuts jobs, creates more labor for the remaining workers, consumes a tremendous, unsustainable amount of energy, and, frankly, doesn't even work that well.

The more we thought about the Herndon piece, the more we liked it, but my skepticism of the artists' methods remains. AI is impossible to control; it can't be tamed. Its use and existence call the very idea of art-making in the 21st century into question (whether producing/selling high art for capitalists, or doodling for pleasure, or being an expert writer paid by corporations for skilled work while needing this money to make art you actually enjoy creating). On one hand, the fact that the Whitney brought this quandary to attention is useful, and good. The fact that the piece is ugly, and stupid, and so clearly at odds with the usual work shown at the Whitney, is good. The incontrovertible fact that the piece points, consciously or unconsciously, towards the fundamental stupidity of contemporary art and its inability to grapple with the crisis of the age, is good. But.

On the other hand, Herndon’s concept points to something that might be called the Stanford Industrial Complex, in which otherwise leftist (even Marxist) artists/humanists affiliated with the university create work that would be much more interesting if it weren't an apologia for tech (Stanford prof Jenny Odell’s otherwise great work is particularly guilty of this). I'm not a Luddite advocating for a crude return to pre-Internet technologies, but perhaps, just perhaps, a university in bed with Silicon Valley shouldn't be the one in charge of setting parameters for the use of AI?

Had more work at the Biennial played with the idea of AI, it might have been useful to see a multitude of viewpoints and interactions. Unfortunately the other work tended, strangely, towards the hyper-sincere, leaving the Herndon piece, despite its central placement, detached from the rest. The result was a relatively uninspired constellation of work, with the exception of the stellar video pieces (especially those by Isaac Julien, whose piece I loved so much I wrote about it in the first draft of my book; Ligia Lewis, whose piece made me both giddy and dizzy; and fellow Penn/Center for Experimental Ethnography prof Sharon Hayes, whose piece surprisingly included a quirky leftist rabbi I knew in LA!). Perhaps the way forward is not an outright rejection of the digital, nor its Herndon-esque embrace-as-critique, but something like its more playful use, while understanding its many and obvious limitations.